Types of review.

The different types of review designed to manage current scientific information overload are described. The post analyzes the critical differences between narrative and systematic reviews and describes specific methodologies such as scoping, rapid, and umbrella reviews, ultimately providing a guide to selecting the appropriate design based on available resources, time constraints, and the research question.

We all have that infamous drawer at home, that sort of domestic Bermuda Triangle. Yes, you too. That ‘catch-all’ drawer that is really a ‘catch-hell’, where a Nokia 3310 charger (just in case the apocalypse returns) coexists with some corroded batteries, a few movie tickets from 2014 (as blurry as your memory of that night) and an orphan button that matches absolutely no shirt in your wardrobe.

Peering inside is terrifying. Trying to find something useful without pricking a finger on a thumbtack or losing your sanity requires courage, but above all, it requires a method. If you apply the “rummage blindly and see what happens” method, you will end up with frustration, an HDMI cable tangled around your very soul, and, most likely, nothing useful in your hands.

Now, let’s perform an exercise in abstraction and raise that drawer to the nth power: imagine it doesn’t measure 40 centimeters, but is the entire internet. Instead of old batteries, it fills up every day with thousands of new scientific studies. And, while some are nuggets of pure gold, like our working charger, others are garbage disguised as science, much like our old movie tickets.

If we try to seek answers in that ocean of information using the “rummaging the drawer” method, that is, taking the first thing Google spits out or whatever a brother-in-law shares on WhatsApp, the result can be totally useless. Information overload is the new digital hoarding, and believe me, although there is plenty of space in the brain, there isn’t enough room for that much trash.

But do not despair. Fortunately for us, a series of tools have been developed that allow us to navigate this compulsive accumulation of data as if we were Marie Kondo clad in a lab coat.

In this post, we will deal with these tools of extreme cleaning: we will look at the types of reviews that have been developed, their characteristics, and how not all of them clean the same way; some just dust the surface, while others use ultraviolet light to detect the stains no one wants to see. Read on, and we will learn to distinguish a deep clean from the simple act of sweeping the dirt under the rug.

The guru vs. the machine

To start talking about types of reviews, we have no choice but to begin with the great dichotomy that separates the world of reviews into two continents: the systematic review versus the narrative review.

These reviews, which we discussed in a previous post, represent two philosophies, two opposing approaches to synthesizing what we know. Therefore, the first mandatory step is understanding the differences between the two. It is like learning to distinguish between advice from your cousin and a report from a forensic expert. Both might be interesting, but only one should guide your most important decisions.

Narrative review: the guru who tells us his (great) story

I’ve told you before about a brother-in-law of mine who is like a walking encyclopaedia. At family dinners, he can give you a fascinating talk on the history of antibiotics, the evolution of vaccines, or why broccoli is, in reality, a conspiracy. He is brilliant, entertaining, and gives you a fantastic panoramic view. That is, in essence, a narrative review (also called traditional or author’s review).

This type of review is written by an expert in the field, and its purpose is to offer a broad and critical view of a topic. It can be useful for diving into a new field, exploring how a concept has evolved over time, or understanding different theoretical approaches to a problem. The question it usually answers is open and exploratory, something like: “What is known about the pathophysiology of the dreaded fildulastrosis?”

The author, using their expert judgment, selects the sources they consider most relevant and weaves them into a story that is usually coherent and easy to follow. Hence its top-tier pedagogical utility regarding general aspects of a topic we don’t know well.

But this feature, which represents one of the advantages of the narrative review, is also its Achilles’ heel: everything depends on the author’s judgment. The study selection process is neither transparent nor reproducible. This throws the door wide open to two methodological demons: selection bias and retrieval bias.

Selection bias occurs when the author, consciously or unconsciously (that’s why they’re a guru), chooses studies that support their point of view and omits those that contradict it. This reminds us of a sad universal truth: there is no love more blind, deaf, and stubborn than that of an academic in love with their own hypothesis.

The consequence of retrieval bias is that there will be no way to know if relevant evidence has been excluded. Was that key study contradicting the whole narrative left out on purpose or simply out of ignorance? Only the guru knows (… or not).

In short, the narrative review is like a landscape photograph taken by an expert photographer. It might be a beautiful and informative image, but it is their vision of the landscape. It is not the whole landscape.

Systematic review: where data kills the narrative

Now, imagine a homicide detective. She doesn’t rely on intuition. She has a method. She collects all the evidence, tags it, analyzes it, and follows a strict protocol so that no detail escapes her and any other detective can replicate her steps and reach the same conclusion. That is the systematic review (SR), the gold standard for evidence synthesis in evidence-based medicine.

In this case, the goal is not to tell a story, but to minimize bias through an explicit, transparent, and, above all, reproducible methodology. And, contrary to what the narrative review does, the SR seeks to answer a very specific and narrow clinical question, not a general topic. Its great power lies in a series of methodological pillars that we will see below.

First, an SR tries to answer a specific, structured clinical question that follows the PICO format (Population, Intervention, Comparison, Outcome). For example: “In adults with higher education (P), does intensive exposure to reality shows featuring screaming couples (I) compared to a documentary about the dung beetle (C) cause a greater reduction in faith in humanity (O)?” This precision is what allows us to compare studies without mixing apples and oranges.
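
To make the PICO structure tangible, here is a minimal Python sketch that stores the four components separately and assembles them into a question. The class name `PicoQuestion` and its method are invented for illustration and do not belong to any standard tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PicoQuestion:
    """A structured clinical question in PICO format (illustrative class)."""

    population: str
    intervention: str
    comparison: str
    outcome: str

    def as_sentence(self) -> str:
        # Assemble the four components into one answerable question.
        return (
            f"In {self.population}, does {self.intervention} "
            f"compared to {self.comparison} cause {self.outcome}?"
        )


# The example question from the text, split into its four components.
question = PicoQuestion(
    population="adults with higher education",
    intervention="intensive exposure to reality shows featuring screaming couples",
    comparison="a documentary about the dung beetle",
    outcome="a greater reduction in faith in humanity",
)
print(question.as_sentence())
```

Keeping the components in separate fields is what later lets us check, study by study, whether each one matches the population, intervention, comparison, and outcome we asked about.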

Second, an SR conducts an exhaustive and sensitive search. Systematic and documented searches are performed across multiple databases, and they go even further, reaching into the so-called “grey literature” (theses, conference proceedings, etc.), registries of unpublished studies, and so on, to decrease the risk of publication bias (for example, the tendency for only studies with positive results to get published).

We could call the third pillar the rule of two: to avoid human bias, the most critical steps of the process (study selection and data extraction) are performed independently by at least two reviewers. If there are disagreements, a third party acts as a referee (usually the boss, who is surely the smartest one on the team). This ensures objectivity and reduces errors.

Finally, before starting the SR, the entire review plan (the question, search methods, inclusion and exclusion criteria) is detailed in an explicit protocol. Ideally, this protocol is publicly registered on platforms like PROSPERO. This prevents researchers from changing the rules of the game halfway through the match if the results aren’t what they expected.
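
The rule of two described above can be sketched in a few lines of Python. This is only an illustration under simple assumptions: each reviewer's decisions are a dictionary of include/exclude flags, and the referee is a function that is consulted only when the two disagree. The function name, the study identifiers, and the decisions are all hypothetical.

```python
from typing import Callable, Dict

Decision = bool  # True = include the study, False = exclude it


def screen_with_two_reviewers(
    reviewer_a: Dict[str, Decision],
    reviewer_b: Dict[str, Decision],
    referee: Callable[[str], Decision],
) -> Dict[str, Decision]:
    """Combine two independent screenings; the referee settles disagreements."""
    if reviewer_a.keys() != reviewer_b.keys():
        raise ValueError("Both reviewers must screen the same set of studies")
    final: Dict[str, Decision] = {}
    for study_id, decision_a in reviewer_a.items():
        decision_b = reviewer_b[study_id]
        if decision_a == decision_b:
            # Agreement: the shared decision stands.
            final[study_id] = decision_a
        else:
            # Disagreement: only now is the third party consulted.
            final[study_id] = referee(study_id)
    return final


def boss_decides(study_id: str) -> Decision:
    # Hypothetical referee: includes study-2 and excludes everything else.
    return study_id == "study-2"


# Invented decisions, purely for illustration.
reviewer_a = {"study-1": True, "study-2": False, "study-3": True}
reviewer_b = {"study-1": True, "study-2": True, "study-3": False}

final_decisions = screen_with_two_reviewers(reviewer_a, reviewer_b, boss_decides)
print(final_decisions)
```

Note that the referee never sees the studies on which both reviewers agree, which is exactly the point: the third opinion breaks ties, it does not replace the independent work.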

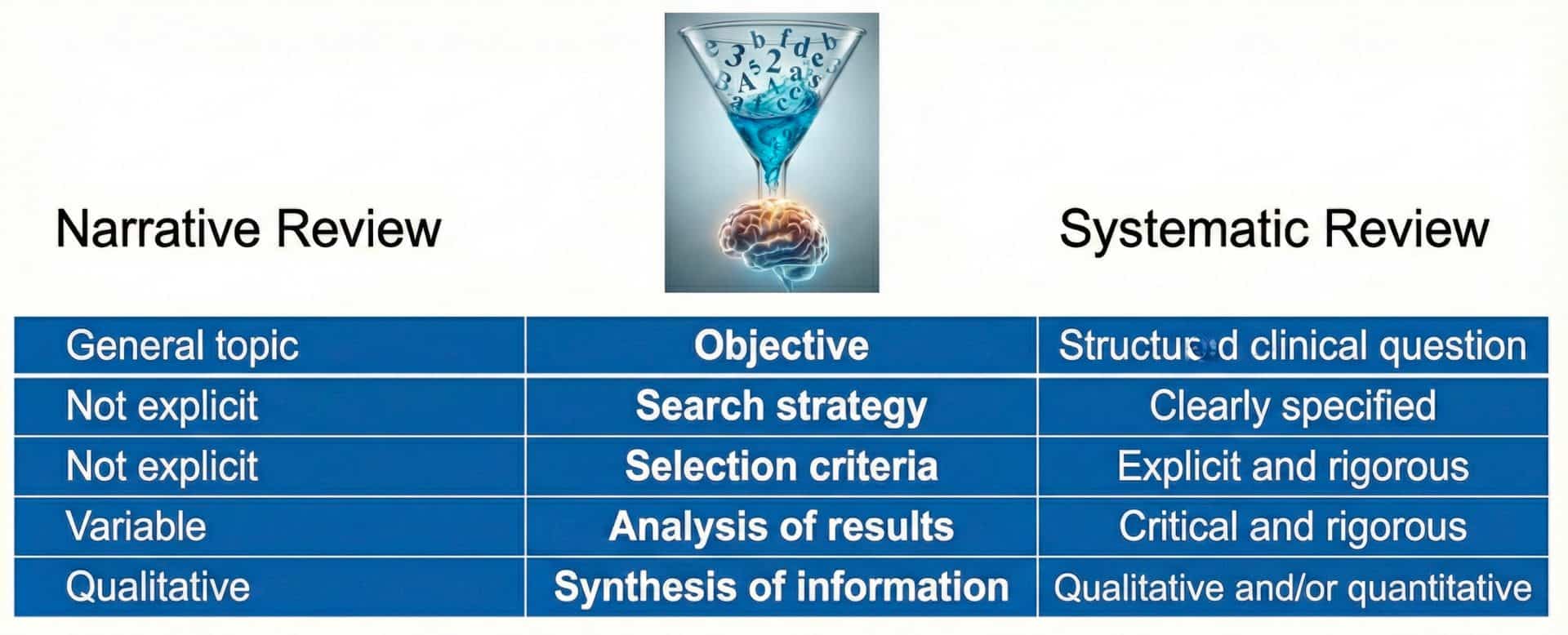

In the attached table, I summarize the differential characteristics between the SR and the narrative review. Understanding these differences will put you ahead of 90% of the clinicians who read review articles. But don’t relax just yet. Beyond these two giants, the jungle of evidence synthesis is full of other fascinating and, at times, confusing creatures.

Maps, haste, and Russian dolls

As evidence-based medicine has matured, researchers have realized that not every question can be answered with the hammer of the traditional systematic review. Sometimes we need a screwdriver, sometimes a map, and sometimes a drone to view the landscape from above. This has led to an explosion of new types of reviews, each adapted to a specific need. Knowing them will allow you to understand what kind of answer each one can give you and what their limits are.

Scoping review: the explorer’s map

Let’s imagine now that we arrive at an unknown island. Our first objective is not to analyze the efficacy of a new hunting method, but to map the terrain: how big is the island? What kind of flora and fauna are there? Are there already any trails? That is exactly what a scoping review does.

Its goal is not to answer a specific question about the effectiveness of an intervention, as the SR does, but to systematically explore and map all available literature on a field to describe its breadth, nature, and characteristics.

This type of methodology is suitable for emerging research fields, those that are poorly defined, or where the literature is very diverse and heterogeneous. For example, imagine you want to investigate the phenomenon of Silicon Valley biohackers. You aren’t looking to know if they will really live to be 150 years old (spoiler: probably not), but simply to map what the heck they are doing. Are they injecting young unicorn blood? Are they taking ice baths while reciting binary code? Here, you don’t judge efficacy; you just want to draw the map of the madness and see what interventions exist and what variables they have dared to measure.

These scoping reviews serve, therefore, to clarify concepts and identify knowledge gaps, and they are often the precursor step to an SR. First, we map the terrain with a broader research question of the PCC type (Population, Concept, Context). Once we find a promising zone, we launch a deeper expedition: our esteemed SR.

Rapid review: the sprinter of the family

Sometimes, an answer is needed now, without waiting the time it takes to conduct a full SR. For these urgent situations, there is the rapid review, which is essentially the fast-food version of evidence synthesis.

Let’s imagine a somewhat dreamlike and rather absurd situation. It is December 29th, and you need to know immediately if eating 12 grapes in 12 seconds statistically increases the risk of choking or if it’s just an urban legend, because New Year’s Eve dinner is in two days. You don’t have a week to read everything. So, you conduct a rapid review: you search only on Google Scholar, only articles in Spanish, and only from the last 5 years. You might miss a key study published in a Korean journal in 1998, but you sacrifice that exhaustiveness in exchange for having an answer before the midnight bells ring.

Thus, we can say that a rapid review is a synthesis designed to answer questions within a very tight timeframe, typically 1 to 6 months, so that decision-makers have some evidence to lean on. But we already know that no matter how we do it, we still have to dive into the ocean of information, so we ask ourselves: how do rapid reviews manage to shorten retrieval times without losing excessive quality in what is retrieved?

The answer is that they apply a series of methodological shortcuts: restricting the search to one or two databases (for example, only PubMed/MEDLINE), limiting the search by date or language (e.g., only articles from the last 5 years in English), skipping duplicate selection and data extraction (a single reviewer does the work to save time), and omitting the public registration of the protocol that is expected of a full SR.

But I don’t want you to think that these reviews lack a method; rather, they consciously simplify the SR method to gain speed. The downside is evident: lower methodological reliability.

A rapid review is better than a decision based on nothing, but its conclusions must be taken with more caution than those of a full SR. One must always read the methods section to see exactly which shortcuts were taken.
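
To see what those shortcuts do to a search result, here is a small Python sketch that applies database, language, and date limits to a list of records. The `Record` fields, the function name, and the three records are all invented for illustration; no real database export is being modeled.

```python
from dataclasses import dataclass
from typing import Iterable, List, Set


@dataclass(frozen=True)
class Record:
    """A bibliographic record; the fields are illustrative only."""

    title: str
    database: str
    language: str
    year: int


def apply_rapid_review_limits(
    records: Iterable[Record],
    databases: Set[str],
    languages: Set[str],
    earliest_year: int,
) -> List[Record]:
    """Keep only the records that pass all three shortcut filters."""
    return [
        record
        for record in records
        if record.database in databases
        and record.language in languages
        and record.year >= earliest_year
    ]


# Hypothetical records, invented purely for illustration.
records = [
    Record("Grape choking case series", "PubMed/MEDLINE", "English", 2022),
    Record("Key grape trial", "Regional index", "Korean", 1998),
    Record("Grape swallowing speed", "PubMed/MEDLINE", "Spanish", 2023),
]

kept = apply_rapid_review_limits(
    records,
    databases={"PubMed/MEDLINE"},
    languages={"English"},
    earliest_year=2020,
)
print([record.title for record in kept])
```

Two of the three records never reach the reviewer, including the old study that might have been the key one. That is the trade-off in miniature: every limit is written down and can be reported in the methods section, but each one also narrows what we are able to find.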

Umbrella review: one review to rule them all

Sometimes we encounter topics that have been investigated so frequently that we don’t just have piles of primary studies, but also a surplus of systematic reviews on the very same subject.

As an example, let me tell you about the soap opera of probiotics for infant colic, a drama we’ve been immersed in for several years now. Season 1 said they worked, Season 2 said “no way,” and the spin-off suggests it depends on the alignment of the planets. There is so much literature on the subject, including SRs, that trying to read them all, many of which are contradictory, will give you more colic than the infant itself.

This is where the umbrella review steps in, the Sauron that comes to rule over all reviews, to review the reviewers, and to tell us if we are looking at a medical miracle or simply at bacteria with an excellent marketing team. Its goal is to gather, synthesize, and analyze the findings of multiple previous SRs.

The umbrella review proves very useful when a very high-level overview of the evidence is needed. It operates at the peak of the evidence pyramid, allowing us to compare the results of different reviews, identify areas of consensus or controversy, and understand why some reviews reach different conclusions and what the current consensus actually is.

But not everything is so wonderful. The main methodological challenge of these reviews is managing the overlap of primary studies. It is very likely that different SRs have included the same clinical trials, and if this isn’t handled with care, you can end up counting the same evidence multiple times, which would distort the conclusions.
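
As a rough illustration of the overlap problem, the Python sketch below counts how many reviews include each primary study and then summarizes the overlap with the corrected covered area (CCA). The CCA is one measure sometimes used for this purpose; it is not mentioned in this post, so take its use here as an assumption of the example. The review labels and trial identifiers are invented.

```python
from collections import Counter
from typing import Dict, Set


def count_inclusions(reviews: Dict[str, Set[str]]) -> Dict[str, int]:
    """Count in how many reviews each primary study appears."""
    counts: Counter = Counter()
    for included_studies in reviews.values():
        counts.update(included_studies)
    return dict(counts)


def corrected_covered_area(reviews: Dict[str, Set[str]]) -> float:
    """CCA = (N - r) / (r * c - r).

    N = total inclusions across reviews (repeats counted),
    r = number of unique primary studies, c = number of reviews.
    """
    counts = count_inclusions(reviews)
    total_inclusions = sum(counts.values())
    unique_studies = len(counts)
    number_of_reviews = len(reviews)
    if number_of_reviews < 2 or unique_studies == 0:
        raise ValueError("Need at least two reviews and one primary study")
    return (total_inclusions - unique_studies) / (
        unique_studies * number_of_reviews - unique_studies
    )


# Hypothetical systematic reviews and the trials each one included.
reviews = {
    "SR-A": {"trial-1", "trial-2", "trial-3"},
    "SR-B": {"trial-2", "trial-3", "trial-4"},
    "SR-C": {"trial-3", "trial-5"},
}

inclusions = count_inclusions(reviews)
repeated = sorted(study for study, n in inclusions.items() if n > 1)
cca = corrected_covered_area(reviews)
print(repeated, round(cca, 2))
```

In this invented example, eight inclusions boil down to only five distinct trials, and one trial appears in all three reviews. Pooling the reviews without noticing that would count the same evidence up to three times.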

Show me your resources and I’ll tell you which review you need

If you have read everything above carefully, you will understand that selecting the right type of review is not a matter of personal preference, but a decision that depends fundamentally on available resources, time, and the scientific objective we are pursuing.

The first thing we must evaluate is our team’s capacity. If we are working alone or do not have at least three people to perform impartial article screening, or if our intention is not to compile absolutely all the evidence on a topic, the logical path is to conduct a narrative review. This format is ideal for addressing general topics, such as an update on the pathophysiology of arterial hypertension, where the author’s experience adds context without the need for strict protocolized exhaustiveness.

If, on the contrary, we have a work team, the next limiting factor is time. A rigorous review can require between 12 and 18 months. However, clinical reality sometimes imposes much tighter deadlines. If we need to answer an urgent question for decision-making and cannot afford to wait a year or more, the correct option is the rapid review. In this scenario, we accept certain methodological shortcuts and a more limited search in exchange for applicable results within a reduced time frame.

When we have both time and a team, we must look at the nature of our question. If we face a broad, heterogeneous topic or one with multiple research questions, the indicated tool is the scoping review, which will allow us to categorize the evidence and detect research gaps without needing to synthesize efficacy results.

On the other hand, if our goal is to bring order to a field where many systematic reviews are already published, perhaps with contradictory results, we should opt for an umbrella review, specifically designed to review other reviews.

Finally, if we have the necessary resources, we have time, and we have a specific, well-formulated research question, the path to follow is the systematic review. This design will allow us to evaluate the evidence in an impartial and reproducible way to inform clinical practice or health policies.
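
The decision path described in this section can be condensed into a small Python function. It is a deliberately simplified sketch: the thresholds (three people, twelve months) come from the figures mentioned above, while the function name and the parameter names are invented for illustration. A real decision will involve more nuance than five arguments can capture.

```python
def choose_review_type(
    team_size: int,
    months_available: int,
    aims_for_all_evidence: bool,
    broad_or_heterogeneous_topic: bool,
    many_existing_reviews: bool,
) -> str:
    """Follow the section's decision path: team, then time, then question."""
    # Step 1: without enough people for independent screening, or without
    # the aim of compiling all the evidence, go narrative.
    if team_size < 3 or not aims_for_all_evidence:
        return "narrative review"
    # Step 2: with a team but less than about a year, accept the shortcuts.
    if months_available < 12:
        return "rapid review"
    # Step 3: with team and time, the nature of the question decides.
    if broad_or_heterogeneous_topic:
        return "scoping review"
    if many_existing_reviews:
        return "umbrella review"
    return "systematic review"


# A team of four, 18 months, and a specific, well-formulated question.
print(choose_review_type(4, 18, True, False, False))
# The same team with only three months to answer.
print(choose_review_type(4, 3, True, False, False))
```

The order of the checks matters: resources are evaluated before the question, because a well-formulated question does not help much if there is nobody to screen the articles or no time to do it properly.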

We’re leaving…

And that is all for today.

We have come a long way from that junk drawer full of cables and movie tickets with which we started. We have learned to distinguish between the story an expert tells us with a glass of wine in hand (narrative review) and the forensic investigation of a crime scene (systematic review). We have also survived the jungle of acronyms, discovering that for every need, whether it be urgency, mapping, or information saturation, there is a specific tool, from rapid reviews to umbrella reviews, passing through scoping reviews. Now we have the complete map so as not to get lost in the labyrinth.

We haven’t said a word about a relatively frequent confusion that tends to haunt beginners: believing that SR and meta-analysis are the same thing. And no, they are not. The SR is the rigorous method of search and selection, which may remain a qualitative synthesis if the studies are too heterogeneous, or take the leap to quantitative synthesis when the data are comparable and homogeneity allows it, unleashing all the magic of the meta-analysis. But that’s another story…