Artificial intelligence tools.

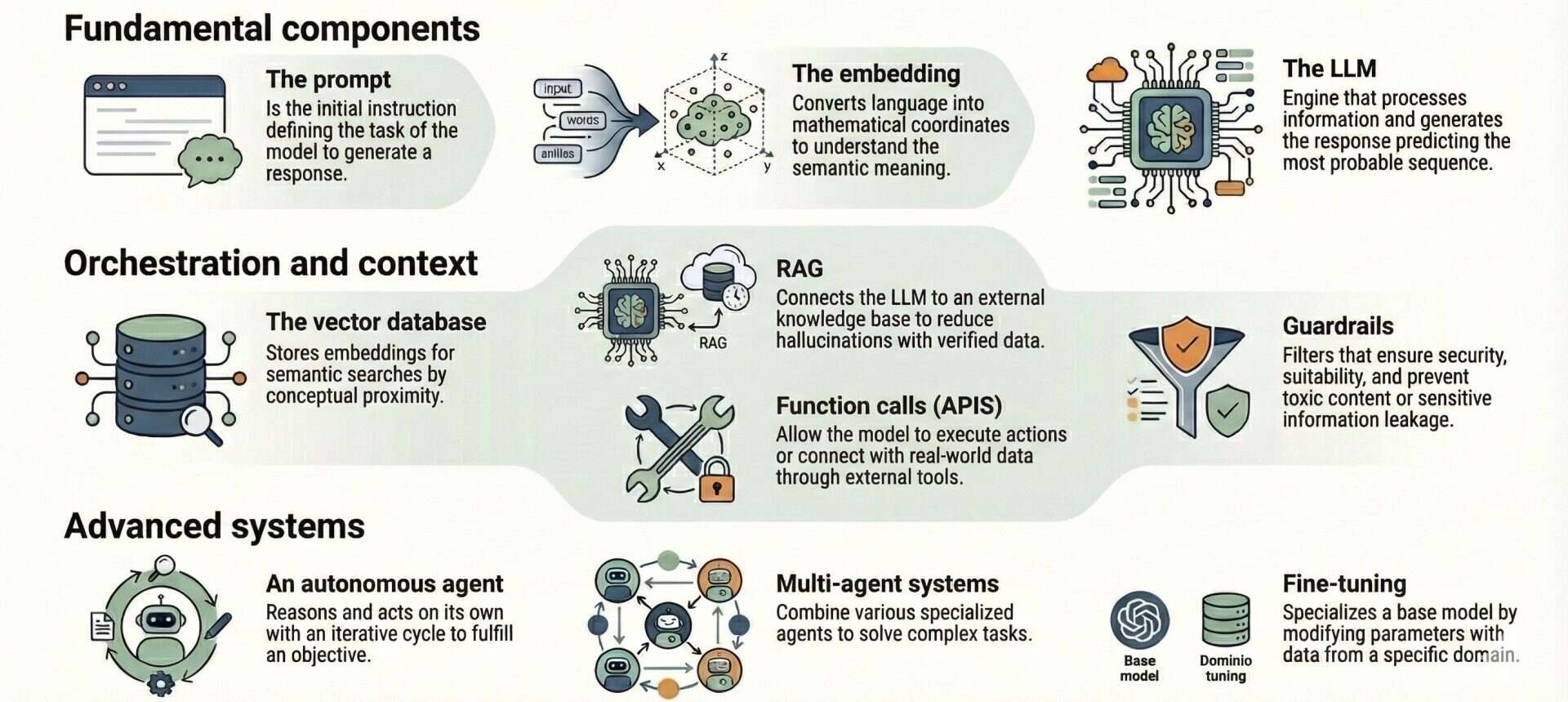

Current artificial intelligence tools rely on complex modular architectures that go well beyond the basic language model. This post reviews the essential technical components that make them work together: embeddings and vector databases as the foundation for semantic search and retrieval-augmented generation (RAG), advanced orchestration mechanisms, autonomous agents, and fine-tuning for specialization in specific domains.

On the night of May 29, 1913, the Théâtre des Champs-Élysées in Paris became the stage for a full-scale battle. No, it wasn’t a political revolution, but the premiere of Igor Stravinsky’s The Rite of Spring. The music was so radical, so full of dissonance and “barbaric” rhythms, that half the audience began screaming insults while the other half shouted for silence.

The dancers, unable to hear the orchestra over the roar of boos and punches in the stalls, had to follow their steps by counting in their heads while the choreographer beat out the rhythm from the wings. It didn’t sound like music; it sounded like pure noise, chaos, and auditory anarchy.

The thing is that avant-garde movements often have to suffer the misunderstanding of their audience. It’s like what happens to science every time it experiences new breakthroughs. When we face new technologies, the “noise” of novelty can prevent us from hearing the underlying melody. For instance, trying to understand how a complex algorithm works or deciphering the validity of a massive data study without the proper methodology is like trying to find a bassoon’s leitmotif while the gentleman in the next seat is screaming in your ear.

But today we aren’t going to talk about theaters; instead, we’ll talk about terms related to artificial intelligence (AI) tools. The current chaos of algorithms, data, and incomprehensible acronyms is much more like the din of a dinner service at rush hour: broken plates, shouted orders, and steam everywhere.

So, let’s put away our opera glasses and put on our chef’s hats to enter the noisiest kitchen of the moment. Welcome to the AI kitchen, where acronyms fly like broken dishes. But don’t panic amid all the noise and pressure cookers: we’re going to bring a little order and find the recipe hidden in this disaster. Sharpen your knives; we’re going in.

Kitchen nightmares

Walking into a conversation about current AI technology is like sneaking into a professional kitchen in the middle of a dinner service: there’s a racket everywhere, someone is shouting “RAG!”, another responds “embeddings!”, and a third seems to be picking a fight with an “agent.” Everyone assumes you know where the salt is and what the heck a “guardrail” is, but the reality is that most people don’t even know how to turn on the oven.

To bring some order to this culinary madhouse, we’re going to forget about black boxes and draw the floor plan of a well-organized kitchen. What matters here is where things are stored, where the onions are chopped, and who’s in charge of the stoves. This structure will allow us to dissect any AI “dish” they serve us.

The pantry and the basic staff

Before lighting a single burner, we need to know what we have to work with. In our AI warehouse, we store the “atomic” elements, those that cannot be split further without breaking the physics of the kitchen.

One of the most famous is the prompt or instruction. There is no mystery to the prompt; it is, quite simply, the order sheet with the instructions we pass to the AI. To put it clearly, it’s like the ticket we hand over to the chef.

In the biomedical field, the specificity of the order is vital. It’s not the same to shout “One order of pain, coming up!” as it is to write: “Act as a physician and summarize the utility of semaglutide for heart failure in patients without diabetes.”

The output is highly sensitive to the prompt: change a single word of the order and the dish that comes out can be very different. You should also know that, much like good cooks, the same AI tool can produce a slightly different dish from the same order. This is due to the randomness built into how these models generate text: the answers should be semantically similar, but they won’t be word-for-word identical every time.
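That randomness typically comes from sampling: at each step the model gives a score to every candidate token, and one is drawn at random in proportion to those scores, with a “temperature” setting that controls how adventurous the draw is. The Python sketch below illustrates the idea; the candidate tokens and their scores are invented for illustration, and real models score tens of thousands of tokens at every step.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Convert raw scores into probabilities; a lower temperature sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_next_token(tokens, logits, temperature=1.0, rng=random):
    """Draw one token at random, weighted by its softmax probability."""
    probs = softmax(logits, temperature)
    return rng.choices(tokens, weights=probs, k=1)[0]

# Hypothetical candidate continuations and scores for one position of an answer
tokens = ["reduces", "lowers", "decreases", "increases"]
logits = [2.0, 1.8, 1.5, -1.0]

# Same "order", several draws: the chosen word can vary from run to run
rng = random.Random()
print([sample_next_token(tokens, logits, temperature=0.8, rng=rng) for _ in range(5)])
```

Near-synonyms such as “reduces” and “lowers” receive similar probabilities, which is why repeated answers tend to differ in wording while keeping roughly the same meaning.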

The second indispensable element in a good AI kitchen is the embedding. This is where the chef’s nose comes in, capable of recognizing the different flavour profiles of their dishes. How does a machine know that “myocardial infarction” and “heart attack” pair the same way? Thanks to embeddings.

Speaking more technically, an embedding model transforms every fragment of text it receives into a vector, that is, a series of coordinates in a multidimensional mathematical space. Imagine an infinite flavour map where not only “appetizing” and “inedible” exist, but hundreds of different axes: one measures “sweetness,” another “acidity,” another “texture,” and so on up to a thousand invisible characteristics.

In this multidimensional geometric map, the embedding model places each fragment in an exact location based on its use and context, so that fragments with similar meanings end up physically landing near each other.

In short, embeddings convert the ambiguity of language into precise mathematical coordinates within a multidimensional vector space. By assigning each ingredient a digital numerical fingerprint based on its qualities, the AI can ignore spelling and focus on semantics. This is how it manages to detect that ingredients with very different labels, such as “cephalgia” and “headache,” actually belong to the same flavour family.
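A common way to measure how “near” two embeddings are is cosine similarity, the cosine of the angle between the two vectors. The sketch below uses tiny, hand-picked 4-dimensional vectors as a stand-in for a real embedding model (which would learn hundreds or thousands of dimensions); the numbers are assumptions chosen only to mirror the examples in the text.

```python
import math

# Hypothetical, hand-picked 4-dimensional "embeddings" for illustration only.
EMBEDDINGS = {
    "headache":              [0.90, 0.10, 0.05, 0.20],
    "cephalgia":             [0.88, 0.12, 0.07, 0.18],
    "myocardial infarction": [0.10, 0.92, 0.30, 0.05],
    "heart attack":          [0.12, 0.90, 0.28, 0.07],
}

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means they point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Different labels, same "flavour family": high similarity
print(round(cosine_similarity(EMBEDDINGS["headache"], EMBEDDINGS["cephalgia"]), 3))
# Unrelated concepts: much lower similarity
print(round(cosine_similarity(EMBEDDINGS["headache"], EMBEDDINGS["heart attack"]), 3))
```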

And thirdly, inside this kitchen’s pantry, we have our star chef: the large language model (LLM).

If we put on our lab coats for a second (over our aprons), an LLM is nothing more than a giant neural network trained on terabytes of text. Its technical functioning is based on probability: it has read so many recipes, books, and conversations that it is capable of predicting what the most logical next word (actually, a token) is in a sequence. Imagine a cook who, even if they’ve never tasted a dish, knows statistically that after “cracking eggs” and “adding salt,” what follows with a 99% probability is “whisking” and not “putting them in the dishwasher.” It doesn’t reason like a human, but it mimics reasoning so well it pulls it off.
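The “predict the next word from statistics” idea can be shown with a deliberately crude stand-in: a bigram counter that records which word follows which in a small corpus. Real LLMs use neural networks over tokens and look at far more context than the previous word; this sketch, with its made-up “recipe corpus”, only illustrates the principle of choosing the statistically most likely continuation.

```python
from collections import Counter, defaultdict

def train_bigrams(corpus):
    """Count, for each word, which words follow it across all training sentences."""
    follows = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows

def next_word_probabilities(follows, word):
    """Turn the follow-up counts for `word` into relative frequencies."""
    counts = follows[word.lower()]
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()} if total else {}

# A tiny, made-up "recipe corpus"
corpus = [
    "crack the eggs add salt then whisk",
    "crack the eggs add salt then whisk gently",
    "wash the plates then dry",
    "add salt then whisk",
]
follows = train_bigrams(corpus)
print(next_word_probabilities(follows, "then"))  # {'whisk': 0.75, 'dry': 0.25}
```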

The LLM is the culinary engine, the only one with the capacity to read the order, understand the flavour profiles, and execute the technique to get the dish out. Without it, the kitchen is empty.

Currently, there are many chefs available, some specializing in certain types of cuisine. Perhaps the most famous and versatile is GPT (OpenAI), with Gemini (Google) hot on its heels (or overtaking it). Other well-known chefs include Claude (Anthropic), famous for its coding ability, and the open-source chefs Llama (Meta) and Mistral (Mistral AI), to name a few.

There are also chefs who let you see their tricks and whom you can take home to cook with, without depending on anyone. In other words, you can download these models for free and run them on your own computer, provided you have enough computing power.

The mise en place

As the legendary chef Auguste Escoffier once said, the “putting in place” of all ingredients and utensils is the sacred order of professional organization in a good kitchen.

It’s not just about chopping onions. You must read the recipe, gather the necessary tools, weigh the ingredients, wash, peel, cut, and place everything in bowls or containers ready to use… The goal is to avoid chaos. If you have a good mise en place, you don’t have to stop while cooking to look for the salt or peel a potato; you just execute, which allows for speed and precision, especially under pressure.

Something similar happens when we use AI tools: having the data organized and the tools ready makes it possible for the LLM to cook the answer without errors. In this area of our AI kitchen, we have four fundamental elements.

The first one we’ll talk about is the vector database, which works like a well-organized spice rack. If we have millions of vectors in the embedding space (those flavour profiles we mentioned earlier), we can’t just have them tossed in a drawer. We need a storage system optimized for semantic search.

This is where geometry meets gastronomy. Unlike a classic database (think of a typical Excel sheet), which looks for exact matches (if we search for ‘salt’, the system will fail if it’s labelled ‘sodium chloride’), vector databases calculate distances.

Every concept processed by the embedding model becomes a vector within a multidimensional space. In this space, distance metrics (such as cosine similarity or Euclidean distance) measure how close two vectors are, and a nearest-neighbour search (k-nearest neighbours, usually approximate for speed) finds the stored vectors closest to the query. This is what allows the system to find, in milliseconds, the ingredient that conceptually pairs best with your order, without the words needing to match letter-for-letter.
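Stripped to its essentials, a vector store keeps (label, vector) pairs and, given a query vector, returns the k most similar ones. The sketch below does an exact, brute-force scan with cosine similarity; production vector databases typically rely on approximate nearest-neighbour indexes (HNSW graphs are a popular choice) to stay fast at millions of vectors. The class name and the 3-dimensional vectors are hypothetical, chosen so that a query for “salt” lands next to “sodium chloride” even though the strings share no letters in common order.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

class VectorStore:
    """A toy in-memory vector store with exact (brute-force) nearest-neighbour search."""

    def __init__(self):
        self.items = []  # list of (label, vector) pairs

    def add(self, label, vector):
        self.items.append((label, vector))

    def search(self, query, k=2):
        """Return the k stored labels most similar to the query, with their scores."""
        scored = [(cosine_similarity(query, vec), label) for label, vec in self.items]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [(label, round(score, 3)) for score, label in scored[:k]]

# Hypothetical embeddings for a few pantry items
store = VectorStore()
store.add("sodium chloride", [0.95, 0.05, 0.10])
store.add("sea salt flakes", [0.90, 0.10, 0.15])
store.add("sugar",           [0.10, 0.95, 0.05])
store.add("olive oil",       [0.05, 0.10, 0.95])

query_salt = [0.93, 0.07, 0.12]  # hypothetical embedding of the query "salt"
print(store.search(query_salt, k=2))
```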

Now let’s think about a catastrophic (though unlikely) situation. Imagine a chef with a lot of imagination and very little memory who invents a paella with chorizo (the dreaded hallucinations). To avoid something like this, we can force them not to cook from memory, but to consult a trusted recipe book before lighting the stove. It’s a technique of pure orchestration: search, read, and then cook based on what has been read, along with their previous knowledge and experience.

And what does this have to do with AI? Think of it this way: a good cook has a general intuition about how to prepare almost anything (their base training), but when they enter a specialized fine-dining restaurant, they aren’t allowed to improvise. Every restaurant has its precise recipes developed through experience, detailing that a sauce must reduce for exactly 12 minutes, not 10 or 15, to give a rather silly example.

Well, an AI model might know a lot about general medicine because it “read” the entire internet during its training, but it doesn’t know your hospital’s specific protocols or the test results for that specific patient from yesterday afternoon. This is where another element of the kitchen’s mise en place comes into play: retrieval-augmented generation, or RAG.

From a technical standpoint, RAG mitigates two critical limitations of LLMs: hallucination and knowledge obsolescence. Models have knowledge frozen in their parameters (what they learned during training). RAG connects that frozen brain to a live knowledge base, typically stored in a vector database.

The technical process follows a three-stage flow. First, during retrieval, the user’s query (the prompt) is converted into an embedding, and the system searches the vector database for the most semantically similar fragments of information, gathering the data most relevant to the query.

Next comes augmentation: the retrieved fragments are “injected” into the prompt (the query is augmented) and passed to the model together. It’s like telling the AI: “Using ONLY the following context [retrieved information], answer the question [user prompt].” Finally, in the generation phase, the LLM produces the response. With the correct answer right under its nose in the context window, the model is much less likely to make mistakes or invent things, and the response stays firmly anchored in the evidence from its knowledge base.
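The retrieve, augment, and generate stages can be sketched in a few lines of Python. To keep the example self-contained, a bag-of-words count vector stands in for a real embedding model (an assumption; real systems use learned dense embeddings and a vector database), the knowledge base is an invented recipe book echoing the 12-minute sauce above, and the final generation call is left to whichever LLM you use.

```python
import math
import re
from collections import Counter

def embed(text):
    """Stand-in for an embedding model: a bag-of-words count vector (assumption)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    na = math.sqrt(sum(v * v for v in a.values()))
    nb = math.sqrt(sum(v * v for v in b.values()))
    return dot / (na * nb) if na and nb else 0.0

def retrieve(query, chunks, k=2):
    """Stage 1 (retrieval): rank knowledge-base chunks by similarity to the query."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]

def augment(query, context_chunks):
    """Stage 2 (augmentation): inject the retrieved chunks into the prompt."""
    context = "\n".join(f"- {c}" for c in context_chunks)
    return (
        "Using ONLY the following context, answer the question.\n"
        f"Context:\n{context}\n"
        f"Question: {query}"
    )

# Hypothetical knowledge base: the restaurant's own recipe book
knowledge_base = [
    "House sauce: reduce the demi-glace for exactly 12 minutes.",
    "Paella: no chorizo, ever.",
    "Dessert: the souffle bakes at 190 degrees.",
]

question = "How many minutes should the demi-glace reduce?"
prompt = augment(question, retrieve(question, knowledge_base, k=1))
print(prompt)
# Stage 3 (generation): send `prompt` to your LLM, e.g. a hypothetical generate(prompt)
```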

Without RAG, the chef would try to guess the dish based on what they know “in general.” With RAG, we force them to open the hospital filing cabinet, pull out the patient’s file and the current protocol, and cook the answer using exclusively those verified ingredients. We turn a generalist model into the ultimate specialist in our specific field.

However, even with a good vector database and a solid knowledge base, there will always be things the chef won’t know how to do on their own, like knowing what the weather is like outside, which might influence the type of dishes diners order for dinner. This is where they use external tools, like a digital thermometer or the weather app on their phone.

In this way, the chef calls the tool, gets real (not invented) data, and keeps cooking. In AI jargon, this is called function calling: the model emits a structured request naming a function and its arguments, the application executes that function, and the result is returned to the model. The main categories are APIs that connect to the real world, which supply information more recent than the model’s frozen training data; connections to technical databases that help answer very specific questions; and what we might call action tools, which are not just about reading but about doing.

The model can connect to your calendar to see whether you are free, or even to payment systems to finalize a reservation. It’s the difference between the chef explaining how a soufflé is made and actually preheating the oven.
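On the application side, function calling usually boils down to a dispatcher: parse the model’s structured request, look up the named function, run it, and hand the result back to the model. The sketch below assumes a simple JSON shape (`name` plus `arguments`) for the request; real providers each define their own schemas for declaring tools and returning calls, and both tools here are hypothetical stubs returning fixed data.

```python
import json

def get_weather(city):
    """Hypothetical tool: a real version would call a weather API."""
    return {"city": city, "temperature_c": 31, "condition": "sunny"}

def check_calendar(date):
    """Hypothetical action tool: a real version would query a calendar service."""
    return {"date": date, "free": True}

# Registry of the tools the model is allowed to call
TOOLS = {"get_weather": get_weather, "check_calendar": check_calendar}

def handle_tool_call(model_output):
    """Parse the model's request, run the named tool, and return the result as JSON."""
    call = json.loads(model_output)
    tool = TOOLS.get(call["name"])
    if tool is None:
        return json.dumps({"error": f"unknown tool {call['name']!r}"})
    result = tool(**call["arguments"])
    return json.dumps(result)  # this string is fed back to the model so it can keep cooking

# What a model's function-call request might look like (format assumed for illustration)
model_output = '{"name": "get_weather", "arguments": {"city": "Madrid"}}'
print(handle_tool_call(model_output))
```

Keeping an explicit registry means the model can only trigger functions the developer has chosen to expose, which matters once tools start doing things (booking, paying) rather than just reading.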

To finish this section of the kitchen, we only need to talk about guardrails, a kind of health inspector. Guardrails are the safety filters that ensure we aren’t serving spoiled food (toxic responses) or revealing the secret sauce recipe (leaks of protected data). Without this inspector watching the pass, no kitchen should be open to the public.
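At their simplest, guardrails are checks that run on a response before it reaches the diner. The sketch below is a rule-based output filter that blocks a forbidden phrase and redacts identifiers that look like patient record numbers; the `MRN-` plus six digits format and the blocked phrase are assumptions for illustration. Production guardrails often add classifier models and policy engines on top of (or instead of) rules like these.

```python
import re

# Hypothetical policy: the record-number format and blocked phrases are assumptions
RECORD_ID = re.compile(r"\bMRN-\d{6}\b")
BLOCKED_PHRASES = ["secret sauce recipe"]

def apply_guardrails(response):
    """Return (allowed, text): refuse disallowed content, redact protected identifiers."""
    lowered = response.lower()
    if any(phrase in lowered for phrase in BLOCKED_PHRASES):
        return False, "Sorry, I can't share that."
    return True, RECORD_ID.sub("[REDACTED]", response)

print(apply_guardrails("Patient MRN-004512 is stable."))
print(apply_guardrails("Here is the Secret Sauce Recipe: ..."))
```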

Haute cuisine

If we leave the daily menu behind and head up to the penthouse, we’ll find the experimental, avant-garde kitchen. Here, we no longer give step-by-step orders; here, we manage autonomy and specialization.

At this level, we encounter agents. We are no longer dealing with a kitchen hand who needs constant supervision, but with an autonomous chef to whom you give a goal and who organizes the work herself.

An agent’s operation is based on the ReAct (Reasoning + Acting) iterative cycle. The agent breaks down the objective into logical steps (reasons), executes specific actions like calling tools or APIs (acts), and analyzes the results obtained (observes). If the result doesn’t meet the success criteria, the agent adjusts its strategy and repeats the loop autonomously until it reaches the final solution.
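The reason, act, observe cycle can be written as a short loop. In the sketch below, a hypothetical rule-based `think` function plays the role the LLM would play in a real agent (deciding the next step from the goal and the observations so far), and the two tools are stubs; what the example does reflect faithfully is the loop structure, including a step limit so the agent cannot spin forever.

```python
def get_weather(city):
    return {"tool": "get_weather", "condition": "sunny"}  # hypothetical stub

def check_calendar(day):
    return {"tool": "check_calendar", "free": True}       # hypothetical stub

TOOLS = {"get_weather": get_weather, "check_calendar": check_calendar}

def think(goal, observations):
    """Hypothetical reasoning step; in a real agent an LLM would produce this decision
    from the goal and the observations. Returns ("act", tool, arg) or ("finish", answer)."""
    seen = {obs["tool"]: obs for obs in observations}
    if "get_weather" not in seen:
        return ("act", "get_weather", "Madrid")
    if seen["get_weather"]["condition"] != "sunny":
        return ("finish", "Book an indoor table.")
    if "check_calendar" not in seen:
        return ("act", "check_calendar", "Saturday")
    if seen["check_calendar"]["free"]:
        return ("finish", "Book a terrace table on Saturday.")
    return ("finish", "No free slot on Saturday.")

def run_agent(goal, max_steps=5):
    """ReAct-style loop: reason -> act -> observe, until finished or out of steps."""
    observations, trace = [], []
    for _ in range(max_steps):
        decision = think(goal, observations)   # Reason
        if decision[0] == "finish":
            return decision[1], trace
        _, tool_name, arg = decision
        result = TOOLS[tool_name](arg)         # Act
        observations.append(result)            # Observe
        trace.append(tool_name)
    return "Stopped: step limit reached.", trace

answer, trace = run_agent("Plan a dinner for Saturday")
print(trace, answer)
```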

The problem is that, occasionally, the goal can be too big a task for a single agent. In these cases, we can turn to the full brigade: multi-agent systems, the Michelin-star level of AI.

Technically speaking, we are talking about multi-agent orchestration architectures, systems where different specialized AI instances interact to solve complex tasks. For example, a “researcher” agent extracts data from primary sources, a “writer” agent structures the content, and a “critic” agent evaluates the draft’s coherence and checks for hallucinations. Through loops of collaboration and debate, these systems refine the final result autonomously, overcoming the limitations of a single, generalist LLM.
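A minimal orchestration of the researcher, writer, and critic roles might look like the Python sketch below. Each “agent” is a hypothetical stub that would, in practice, be a separate LLM call with its own instructions and tools; the orchestrator runs research once and then loops between writer and critic until the critic approves or a round limit is reached.

```python
def researcher(topic):
    """Hypothetical agent: would query primary sources; here it returns canned notes."""
    return [f"{topic}: finding A (source 1)", f"{topic}: finding B (source 2)"]

def writer(notes, feedback=None):
    """Hypothetical agent: drafts text from the notes, addressing critic feedback if any."""
    draft = " ".join(note.split(": ", 1)[1] for note in notes)
    if feedback == "cite sources":
        draft += " [sources 1, 2]"
    return draft

def critic(draft):
    """Hypothetical agent: returns None to approve, or a short piece of feedback."""
    return None if "[sources" in draft else "cite sources"

def orchestrate(topic, max_rounds=3):
    """Research once, then loop writer <-> critic until approval or the round limit."""
    notes = researcher(topic)
    feedback = None
    for round_number in range(1, max_rounds + 1):
        draft = writer(notes, feedback)
        feedback = critic(draft)
        if feedback is None:
            return draft, round_number
    return draft, max_rounds

draft, rounds = orchestrate("semaglutide in heart failure")
print(rounds, draft)
```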

But there are times when even the best recipe book and the best team of sous-chefs aren’t enough. If we want the chef to do more than just follow instructions, if we want them to live and breathe their culinary style, we need the ultimate haute cuisine resource we’ll discuss today: fine-tuning. The goal is no longer for them to consult an external manual, but to send them on an intensive course to internalize techniques until they become part of their muscle memory, cooking dishes almost by instinct.

Returning to the field of AI, fine-tuning consists of taking a pre-trained base model and subjecting it to an additional phase of supervised learning, using a specific dataset from the domain of interest (such as proprietary clinical protocols or company codes).

Unlike RAG, which works through temporary memory (the context retrieved and added to the prompt), fine-tuning permanently modifies the weights (the parameters) of the neural network. This locks specific knowledge or behaviour directly into the model’s structure, achieving a level of specialization and efficiency that would be very hard to reach through prompts alone.
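The core idea, resuming training from pretrained weights so that the weights themselves change, can be shown on a model with just two parameters. Real LLM fine-tuning adjusts millions or billions of parameters with far more elaborate machinery; in this sketch the “general” model, the domain data, and the learning-rate settings are all hypothetical, and plain gradient descent on mean squared error stands in for the training procedure.

```python
def predict(weights, x):
    """A two-parameter linear model: y = w * x + b."""
    w, b = weights
    return w * x + b

def fine_tune(weights, data, learning_rate=0.05, epochs=2000):
    """Continue training from pretrained weights with gradient descent on mean squared error."""
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return (w, b)  # new weights: the "muscle memory" now lives inside the model

pretrained = (1.0, 0.0)                          # hypothetical "general" model: y = x
domain_data = [(1, 3), (2, 5), (3, 7), (4, 9)]   # hypothetical domain rule: y = 2x + 1

tuned = fine_tune(pretrained, domain_data)
print(pretrained, tuple(round(p, 2) for p in tuned))
print(round(predict(tuned, 10), 2))  # the tuned model answers without any extra context
```

Contrast this with the RAG sketch’s approach: there, the model is untouched and the knowledge travels in the prompt; here, nothing is added to the prompt and the knowledge ends up in the weights.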

In the previous figure, you can see a schematic representation of these essential components for the functioning of AI tools.

We’re leaving…

And with that, we’re wrapping up this gastronomic tour. In this journey through the digital kitchen, we’ve broken down some of the most critical elements that power today’s AI tools, from the essential basic ingredients, like the prompts that start the order, the embeddings that define the flavour map, and the LLMs acting as head chefs, to the most sophisticated preparation techniques, such as the use of RAG and vector databases to manage memory, or the deployment of autonomous agents and fine-tuning to achieve culinary excellence.

It’s important to understand that the true magic of AI doesn’t lie in a single isolated ingredient, but in their perfect coordination. Imagine the process: when we launch a complex query, an autonomous agent often takes charge, rushes to the organized pantry of the vector database to retrieve the exact recipe via RAG, consults external tools to validate data in real time, and only then lets the model (perhaps previously specialized through fine-tuning) cook the final response under the watchful eye of the health inspectors (guardrails). It is a technical choreography where memory, action, and safety come together to serve the perfect dish without the diner ever noticing the chaos of the kitchen.

And now, for real this time, we’re off. In this post, we’ve focused on agile response tools and left aside the new reasoning LLMs, which are useful when intuition isn’t enough and pure logic is required. Instead of responding instantly, these models spend compute time thinking: they map out plans and verify their own hypotheses internally before serving the result. But that is another story…