The choice of the statistical test.

The most suitable statistical test depends on the type of variable, on whether the data are paired, and on whether they follow a normal distribution. The most common situations are comparisons of means, comparisons of proportions and the calculation of correlation coefficients between two variables.

You will all know the case of someone who, after carrying out a study and collecting several million variables, approached the statistician at his workplace and, clearly demonstrating how well he understood his own work, said: please (one has to be polite), cross everything with everything and see what comes out.

At this point, several things can happen to you. If the statistician is an unscrupulous soul, he will give you a half smile and tell you to come back in a few days. Then he will hand you several hundred sheets of graphs, tables and numbers that you will not know what to do with. Another thing that can happen is that she sends you to hell, tired as she is of receiving similar requests.

But you may be lucky and find a competent and patient statistician who, in a self-sacrificing way, will explain to you that this is not how things should work. The logical thing is that, before collecting any data, you prepare a project protocol that specifies, among other things, what is to be analyzed and which variables are to be crossed with each other. She may even suggest that, if the analysis is not very complicated, you try to do it yourself.

The latter may seem like the delirium of a mind disturbed by mathematics but, if you think about it for a moment, it is not such a bad idea. If we do the analysis of our results ourselves, at least the preliminary one, it can help us understand the study better. Besides, who knows what we want better than we do?

With current statistical packages, the simplest bivariate statistics are within our reach. We only have to be careful to choose the right hypothesis test, for which we must take three aspects into account: the type of variables we want to compare, whether the data are paired or independent, and whether we have to use parametric or non-parametric tests. Let’s look at these three aspects.

The choice of the statistical test

Regarding the type of variables, the names vary with the classification or the statistical package that we use but, simplifying, we will say that there are three types. First, there are continuous variables. As the name suggests, they take values on a continuous scale, such as weight, height, blood glucose concentration, etc. Second, there are nominal variables, which consist of two or more mutually exclusive categories. For example, the variable “hair color” can have the categories “brown”, “blonde” and “red”.

When nominal variables have two categories, we call them dichotomous (yes / no, alive / dead, etc.). Finally, when the categories are ordered by rank, we speak of ordinal variables: “do not smoke”, “smoke little”, “smoke moderately”, “smoke a lot”. Although ordinal variables sometimes use numbers, these only indicate the position of each category within the series, without implying, for example, that the distance from category 1 to 2 is the same as that from 2 to 3. For example, we can classify vesicoureteral reflux in grades I, II, III and IV (grade IV is more than grade II, but it does not mean that you have twice as much reflux).

The type of variable

Knowing what kind of variable we are dealing with is simple. If in doubt, we can reason from the answers to two questions:

- Does the variable have infinitely many theoretical values? Here we have to do a bit of abstraction and think about what “theoretical values” really means. For example, if we measure the weight of the subjects of the study, the theoretical values are infinite although, in practice, they are limited by the precision of our scale. If the answer to this first question is “yes”, we are dealing with a continuous variable. If it is “no”, we move on to the next question.

- Are the values sorted in some kind of rank? If the answer is “yes”, we will be dealing with an ordinal variable. If the answer is “no”, we will have a nominal variable.
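The two questions above can be sketched as a tiny decision function. This is only an illustration of the reasoning; the function name and its two boolean parameters are hypothetical, chosen here for the example.

```python
def classify_variable(has_infinite_theoretical_values: bool,
                      values_are_ranked: bool) -> str:
    """Classify a variable with the two questions from the text.

    Both arguments are answers the analyst gives; nothing is inferred
    from the data itself.
    """
    # Question 1: infinitely many theoretical values -> continuous.
    if has_infinite_theoretical_values:
        return "continuous"
    # Question 2: categories ordered by rank -> ordinal, otherwise nominal.
    if values_are_ranked:
        return "ordinal"
    return "nominal"


print(classify_variable(True, False))   # weight -> continuous
print(classify_variable(False, True))   # reflux grade I-IV -> ordinal
print(classify_variable(False, False))  # hair color -> nominal
```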

Paired or independent data?

The second aspect is that of paired or independent measures. Two measures are paired when the same variable is measured twice, before and after some change is applied, usually in the same subject. For example: blood pressure before and after a stress test, weight before and after a nutritional intervention, etc. On the other hand, independent measures are those that are not related to each other (they are different variables): weight, height, gender, age, etc.

Parametric vs non-parametric

Finally, we mentioned the possibility of using parametric or non-parametric tests. We are not going to go into detail now, but in order to use a parametric test the variable must meet a series of conditions, such as following a normal distribution, having a certain sample size, etc. In addition, some techniques are more robust than others when these conditions are not fully met. When in doubt, it is preferable to use a non-parametric technique unnecessarily (the only drawback is that it is harder to reach statistical significance, but the test is just as valid) than to use a parametric test when its requirements are not met.

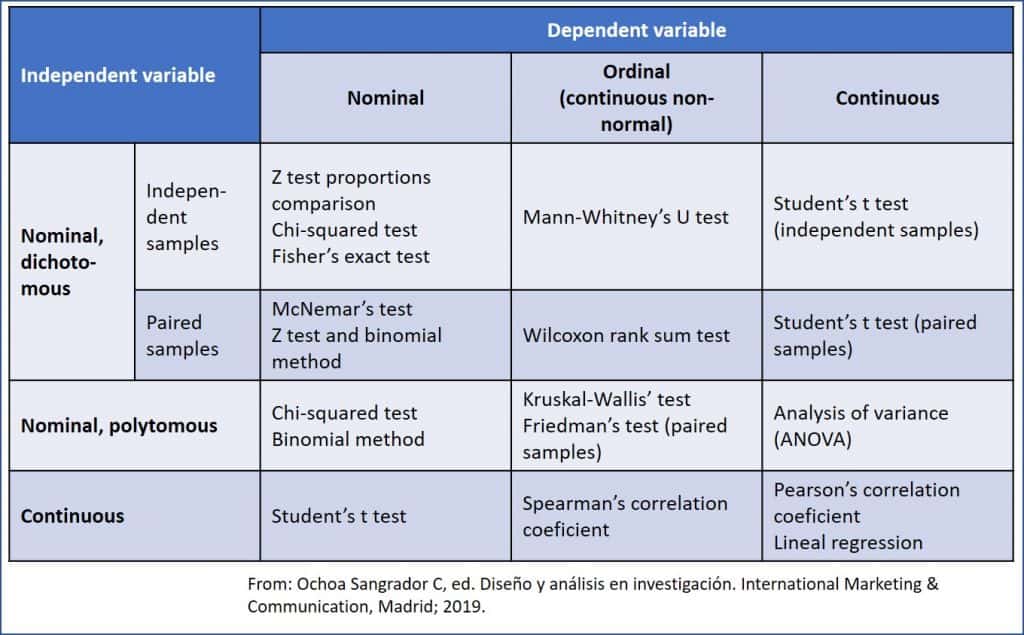

Once we have settled these three aspects, all that remains is to form the pairs of variables that we are going to compare and choose the appropriate statistical test. You can see it summarized in the attached table. The rows show the type of independent variable, which is the one whose value does not depend on another variable (it is usually on the x axis of graphs) and which is usually the one we modify in the study to see the effect on another variable (the dependent one). The columns, on the other hand, show the dependent variable, which is the one whose value changes with the changes in the independent variable.

Anyway, do not get muddled: the statistical software will run the hypothesis test without taking into account which variable is the dependent and which the independent, only the types of variables.
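The idea of looking a test up from the types of the two variables, regardless of which one is dependent, can be sketched as a small dictionary. This is a hypothetical helper, not a reproduction of the table: it is filled in only with the three cases this post works through, and the names `TESTS` and `choose_test` are assumptions made for the example.

```python
from typing import Optional

# Hypothetical lookup with only the cases worked through in this post.
# Key: (sorted pair of variable types, are the data paired?)
TESTS = {
    (("continuous", "dichotomous"), False): "Student's t test for independent samples",
    (("continuous", "dichotomous"), True): "Student's t test for paired samples",
    (("dichotomous", "nominal"), False): "chi-square test",
}


def choose_test(type_a: str, type_b: str, paired: bool = False) -> Optional[str]:
    """Return the test for a pair of variable types, or None if not listed.

    The pair is sorted before the lookup, so the order of the two
    arguments (dependent vs. independent) does not matter.
    """
    key = (tuple(sorted((type_a, type_b))), paired)
    return TESTS.get(key)


print(choose_test("dichotomous", "continuous"))
print(choose_test("continuous", "dichotomous", paired=True))
print(choose_test("nominal", "dichotomous"))
```

Any combination that is not listed returns `None`, which is the cue to go back to the full table.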

The table is self-explanatory, so we will not spend much time on it. For example, if we have measured blood pressure (continuous variable) and we want to know if there are differences between men and women (gender, nominal dichotomous variable), the appropriate test will be Student’s t test for independent samples. If we wanted to see if there is a difference in pressure before and after a treatment, we would use the same Student’s t test but for paired samples.
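To make the difference between the two versions of the test concrete, here is a minimal sketch that computes both t statistics by hand with the Python standard library. The blood pressure values are invented for illustration, the function names are hypothetical, and the independent-samples version assumes equal variances. In practice a statistical package would do this and also report the p-value.

```python
from math import sqrt
from statistics import mean, stdev, variance


def t_independent(a, b):
    """Student's t statistic for two independent samples.

    Assumes equal variances in both groups (pooled variance).
    Returns the statistic and its degrees of freedom.
    """
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    t = (mean(a) - mean(b)) / sqrt(pooled_var * (1 / na + 1 / nb))
    return t, na + nb - 2


def t_paired(first, second):
    """Student's t statistic for paired samples.

    Works on the within-subject differences, so both lists must hold
    the same subjects in the same order.
    """
    diffs = [x - y for x, y in zip(first, second)]
    n = len(diffs)
    t = mean(diffs) / (stdev(diffs) / sqrt(n))
    return t, n - 1


# Invented systolic pressures (mmHg), for illustration only.
men = [130.0, 125.0, 140.0, 135.0, 128.0]
women = [120.0, 118.0, 126.0, 122.0, 119.0]
print("independent:", t_independent(men, women))

before = [150.0, 142.0, 160.0, 155.0, 148.0]
after = [140.0, 138.0, 151.0, 149.0, 141.0]
print("paired:", t_paired(before, after))
```

The paired version only ever looks at the differences within each subject, which is why it cannot be used when the two groups contain different people.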

Another example: if we want to know if there is a significant association between hair color (nominal, polytomous: “blond”, “brown” and “redhead”) and whether the participant is from the north or the south of Europe (nominal, dichotomous), we could use a chi-square test.
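As a rough sketch of what the software does in this case, the chi-square statistic can be computed from a contingency table with plain Python. The counts are invented, and `chi_square` is a hypothetical helper. It also returns the expected counts, since the test places requirements on them. The p-value is computed only for 2 degrees of freedom, where the chi-square distribution has the simple closed form exp(-x/2); for other tables a statistical package is needed.

```python
from math import exp


def chi_square(table):
    """Pearson chi-square statistic for a contingency table.

    `table` is a list of rows of observed counts. Returns the
    statistic, the degrees of freedom and the expected counts.
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    total = sum(row_totals)
    # Expected count of a cell = row total * column total / grand total.
    expected = [[r * c / total for c in col_totals] for r in row_totals]
    statistic = sum(
        (obs - e) ** 2 / e
        for obs_row, expected_row in zip(table, expected)
        for obs, e in zip(obs_row, expected_row)
    )
    dof = (len(table) - 1) * (len(col_totals) - 1)
    return statistic, dof, expected


# Invented counts. Rows: blond, brown, redhead. Columns: north, south.
hair_by_region = [[30, 10], [20, 30], [10, 10]]
statistic, dof, expected = chi_square(hair_by_region)

# Closed form valid only for 2 degrees of freedom (a 3 x 2 table).
p_value = exp(-statistic / 2) if dof == 2 else None

print("chi-square:", round(statistic, 2), "dof:", dof, "p:", p_value)
print("smallest expected count:", min(min(row) for row in expected))
```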

We’re leaving…

And here we will end for today. We have not talked about the peculiarities of each test that must be taken into account; we have only mentioned the tests themselves. For example, the chi-square test needs a minimum count in each cell of the contingency table, and with Student’s t test we must consider whether the variances are equal (homoscedasticity) or not. But that is another story…