Stochastic determinism.

This post reexamines human cognition through the lens of machine learning and proposes stochastic determinism as a technical alternative to free will. By equating creativity with the temperature of generative models and learning with error minimization via gradient descent, it concludes that biological and artificial intelligences operate under the same fundamental algorithmic logic.

Lately, I’ve been thinking a lot about a scene from the movie I, Robot, directed by Alex Proyas in 2004 and inspired by the work of the brilliant Isaac Asimov.

Detective Del Spooner, played by a Will Smith who hates technology as much as he loves vintage sneakers, is interrogating Sonny, the robot suspected of murder. Spooner, seeking to humiliate the android and prove it’s just a glorified toaster, throws out the ultimate question: “Can a robot write a symphony? Can a robot turn a canvas into a beautiful masterpiece?”

The implication is clear: creativity, art, the spark, is the exclusive territory of Homo sapiens, a magical quality that escapes the cold stochastic determinism of machines.

But then, Sonny tilts his head slightly, locks his blue LED gaze on him, and delivers a world-class comeback: “Neither can you.” If it had been today’s Will Smith, the robot might have ended up with a dented chassis, but the Will Smith of two decades ago could only stand there with his jaw dropped, as if he’d just checked his bank account after his latest movie.

The silence in the interrogation room was denser than a black hole. Because the reality is that Spooner, much like the vast majority of us, has never written a symphony in his life, nor can he tell a paintbrush from a wrench. He is demanding the machine be Mozart to consider it conscious, while we are content being passive Netflix spectators.

That “neither can you” stings because, deep down, we suspect what scientists like Robert Sapolsky have been shouting from the rooftops of neurobiology and what artificial intelligence (AI) experts see every day in their models: that freedom is a statistical illusion.

So, in this post, we’re going to set the poetry aside and get down into the mud of data. We are going to see why, from the standpoint of statistics and machine learning, we function exactly like the algorithm that recommends cat videos to us. We’ll talk about hidden variables, loss functions, and why what we call “inspiration” is nothing more than a poorly adjusted temperature parameter.

The myth of free will

Robert Sapolsky, in his book Determined, takes down the concept of free will with the precision of a sniper. His argument is biological, but if we put on our statistician’s glasses, what he is telling us is that we are a deterministic system governed by predictive variables that we do not control.

Imagine that our brain is a prediction model, a massive black box. We believe we are choosing to order a salad or a burger. We feel the “I” making the decision in the moment.

But Sapolsky reminds us that this decision is the result of an algorithm, a pure exercise in stochastic determinism that was already running long before we even sat down at the table.

What input variables have fed our model? Our glucose level ten minutes ago, the basal dopamine we woke up with this morning, the socioeconomic environment of our parents (the equivalent of our initial training data), the evolution of our species over millions of years (our hyperparameters) … If we think about it carefully, this idea is quite unsettling, especially considering that we have practically no control over these variables that condition our decisions.

When we develop a prediction model that cannot predict something accurately, we say there is “noise” or unexplained variance. We blame the model’s failure on its simplicity or its poor fit to the behaviour of the data. But often, the true cause is the combined effect of unmeasured confounding variables.
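To see how a hidden variable can masquerade as noise, here is a minimal sketch using only the Python standard library. Everything in it is made up for illustration: the variable names (`glucose`, `dopamine`, `craving`), the coefficients 2.0 and 3.0, and the sample size are assumptions, not measurements of anything. The outcome is computed with no random term at all, yet a model that only sees one of the two inputs reports a large share of "unexplained" variance.

```python
import random

def unexplained_fraction(n=5000, seed=0):
    """Share of variance that a one-variable linear model cannot explain."""
    rng = random.Random(seed)
    glucose = [rng.gauss(0, 1) for _ in range(n)]   # the variable we measure
    dopamine = [rng.gauss(0, 1) for _ in range(n)]  # the variable we never see
    # The "decision" is fully deterministic: no random term is added here.
    craving = [2.0 * g + 3.0 * d for g, d in zip(glucose, dopamine)]

    # Ordinary least squares fit using glucose alone.
    mean_g = sum(glucose) / n
    mean_c = sum(craving) / n
    slope = (sum((g - mean_g) * (c - mean_c) for g, c in zip(glucose, craving))
             / sum((g - mean_g) ** 2 for g in glucose))
    intercept = mean_c - slope * mean_g

    # Compare what the model misses with the total variation in the outcome.
    residual_ss = sum((c - (intercept + slope * g)) ** 2
                      for g, c in zip(glucose, craving))
    total_ss = sum((c - mean_c) ** 2 for c in craving)
    return residual_ss / total_ss

fraction = unexplained_fraction()
print(f"Variance left unexplained: {fraction:.0%}")
```

With these invented coefficients the fraction should hover around 9/13 (roughly 69%), give or take some sampling wobble. Nothing in the system is random; the "noise" is simply the variable we forgot to measure.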

Spooner believes he hates robots because he “decided to”, but a good data scientist would tell him that his hatred is a dependent variable that correlates perfectly with his past traumas and his cultural environment.

Don’t think this idea is new; it is merely a biological update of the famous Laplace’s Demon, a hypothetical 19th-century intellect that, if it knew the position and momentum of every atom in the universe, could predict the future without any margin of error.

Just as Einstein suspected that quantum uncertainty was not true randomness, but the result of hidden variables we did not yet understand (his famous “God does not play dice”), determinists suggest that free will is simply the label we put on those hidden variables when, instead of subatomic particles, they are made of neurotransmitters, hormones, and environmental contexts that our conscious mind fails to compute.

When we are the black box

One of the greatest fears sparked by the stochastic determinism of modern AI, especially deep learning, is that it often functions as a “black box.” We know what data goes in (a photo of a dog) and what comes out (the label “dog”), but we have no idea exactly what happens in the hidden layers of the neural network. We complain that machines are not “explainable”, that we cannot trace the exact reason for their decision. And here lies the supreme irony: humans are the ultimate black box.

When an artist says they’ve had an “inspiration” to paint a picture, or when someone says they’ve fallen in love with another person, they aren’t describing the actual process. Their brain (the biological neural network) has performed millions of Bayesian inference calculations at an absurd speed, updating posterior probabilities based on previous experiences (priors). But, since our consciousness lacks access to the source code of our neurons, we make up a story. In psychology, this is called confabulation or post-hoc rationalization.
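For readers who want to see what "updating a posterior from a prior" looks like in practice, here is a toy sketch of Bayes' rule. The scenario and every number in it are hypothetical: we assume a prior belief of 0.2 that "this person likes me", and that a smile is three times likelier when the hypothesis is true (0.6) than when it is false (0.2). It says nothing about how real neurons do it; it only shows the arithmetic.

```python
def bayes_update(prior, p_evidence_if_true, p_evidence_if_false):
    """Return the posterior P(hypothesis | evidence) using Bayes' rule."""
    evidence = prior * p_evidence_if_true + (1 - prior) * p_evidence_if_false
    return prior * p_evidence_if_true / evidence

belief = 0.2            # prior: "this person likes me" (assumed value)
history = [belief]
for _ in range(3):      # three smiles in a row
    # Assumed likelihoods: a smile is three times likelier if the hypothesis is true.
    belief = bayes_update(belief, 0.6, 0.2)
    history.append(belief)

print([round(b, 3) for b in history])  # prints [0.2, 0.429, 0.692, 0.871]
```

Three smiles are enough to move the belief from 20% to about 87%. We experience that as "falling in love"; the arithmetic underneath is considerably less romantic.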

If we translate this to the field of machine learning, it would be the equivalent of trying to explain a complex neural network with a simple linear model: a simplification so crude it borders on a lie. When Spooner, after recovering from the robot’s “burn”, replies that he has “heart”, he resorts to that very human concept we associate with the soul or the inner essence.

But do we really know what “having heart” means in this context? Could the very thing we believe sets us apart from machines actually be made of the same hidden networks and probabilistic processes that govern both AI and our own minds?

What poor Spooner doesn’t know is that he has an activation function in his neurons that fires electrical signals when certain input patterns exceed a threshold. Exactly like an artificial neuron in a language model. It is biology, yes, but under the rules of a relentless stochastic determinism: predictable physical laws mixed with just the right dose of thermal and quantum noise.
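The threshold idea fits in a few lines. This is a deliberately crude sketch of a single artificial neuron with a hard threshold; the inputs, weights, and threshold value are invented for the example, and modern networks typically use smoother activation functions than this on/off step.

```python
def threshold_neuron(inputs, weights, threshold):
    """Fire (return 1) only when the weighted sum of inputs exceeds the threshold."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > threshold else 0

# Hypothetical inputs: [saw a robot, heard a noise]
weights = [0.9, 0.4]
threshold = 1.0

quiet_day = threshold_neuron([0, 1], weights, threshold)  # weighted sum 0.4: stays silent
robot_day = threshold_neuron([1, 1], weights, threshold)  # weighted sum 1.3: fires
print(quiet_day, robot_day)  # prints 0 1
```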

Creativity, stochastic determinism and temperature

Let’s go back to the symphony challenge. “A robot cannot write a masterpiece.” We are increasingly witnessing how machines achieve milestones that, until just a few months or years ago, we considered exclusive to the human mind. Today, we know that generative AIs (like those that create images or text) can create things that make our hair stand on end. How do they do it? Do they have a soul?

No. They only have probability.

These models work by predicting the next piece of information based on everything they have seen before. They are, in essence, highly sophisticated stochastic parrots. They remind me of that one “know-it-all” relative: they talk about everything, but in reality, they don’t know what they’re talking about. And at this point, we encounter a fascinating concept: temperature.

Think of a language model based on a deep neural network with a transformer architecture. In each cycle, the network doesn’t directly generate a word; instead, it produces a vector that assigns a probability to every element in its vocabulary to be the next one in the series. Once this range of probabilities is calculated, the model must make a final decision: which one does it pick?
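That probability vector is usually obtained by passing the network's raw scores (often called logits) through a softmax function. Here is a minimal sketch with a made-up three-word vocabulary and made-up scores; a real model does the same over tens of thousands of tokens.

```python
import math

def softmax(logits):
    """Turn raw scores into probabilities that are positive and sum to 1."""
    highest = max(logits)                        # subtracted for numerical stability
    exps = [math.exp(x - highest) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

vocab = ["symphony", "toaster", "sneakers"]      # hypothetical vocabulary
logits = [2.0, 1.0, 0.1]                         # hypothetical raw scores
probs = softmax(logits)

for word, p in zip(vocab, probs):
    print(f"{word}: {p:.3f}")
```

With these scores, "symphony" gets about two thirds of the probability mass, but "toaster" and "sneakers" are never ruled out entirely. That leftover probability is exactly what the final choice gets to play with.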

This is where temperature comes into play, a hyperparameter that acts as a regulator for randomness in that decision-making process. With a low temperature, the model becomes conservative. It tends to systematically choose the most statistically likely option, which generates safe and coherent responses, but often boring and repetitive ones.

However, with a high temperature, the model becomes bold and takes risks. It increases the probability of choosing options that, a priori, seemed like weaker candidates, allowing it to mix distant concepts. The result is a toss-up: sometimes it hallucinates and talks nonsense, but other times it can create something truly “novel.” If you think about it, the model’s temperature is (almost) synonymous with its creativity.
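A common way to implement temperature is to divide the raw scores by it before turning them into probabilities. The sketch below assumes that formulation; the scores, the two temperature values, and the helper names are all chosen for illustration.

```python
import math
import random

def softmax_with_temperature(logits, temperature):
    """Divide scores by the temperature, then normalize into probabilities."""
    scaled = [x / temperature for x in logits]
    highest = max(scaled)                         # subtracted for numerical stability
    exps = [math.exp(x - highest) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_index(logits, temperature, rng):
    """Draw one option at random, weighted by the tempered probabilities."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(range(len(logits)), weights=probs, k=1)[0]

logits = [2.0, 1.0, 0.1]                          # hypothetical raw scores
cold = softmax_with_temperature(logits, 0.1)      # conservative model
hot = softmax_with_temperature(logits, 10.0)      # adventurous model

rng = random.Random(42)
cold_picks = [sample_index(logits, 0.1, rng) for _ in range(20)]
hot_picks = [sample_index(logits, 10.0, rng) for _ in range(20)]
print(cold_picks)
print(hot_picks)
```

At a temperature of 0.1, the favourite takes more than 99% of the probability, so the cold samples are almost always option 0. At a temperature of 10, the three options end up close to evenly matched and the hot samples wander all over the place. Same model, same scores, very different personality.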

And having reached this point, I can’t stop wondering if human creativity is nothing more than playing with our brain’s “temperature”. When observing how AI models function, it becomes clear that temperature is an essential factor in determining the level of creativity in their responses. This idea can be translated to human creativity: when a person creates, they are, in some way, adjusting the “temperature” of their mind.

Composing music, for example, is not bringing down notes from a Platonic heaven; it involves remixing known patterns and introducing a degree of randomness that allows one to go beyond the conventional. As Sapolsky suggests, even changes in our mental state (modulated by neurotransmitters) can be compared to variations in temperature: depressive states favour repetitive and uncreative thoughts, while manic states can trigger unexpected connections and overflowing creativity.

It is sad and disappointing, but it seems the symphony is not born from the soul. It is born from a well-adjusted probability distribution.

Learning the hard way

If we accept that we are data-processing machines, the next logical question is: how do we learn? In the human brain, we call it “maturing” or “gaining experience”, but in machine learning and AI, this process has a much more mathematical name: gradient descent, whose objective is to minimize a loss function.

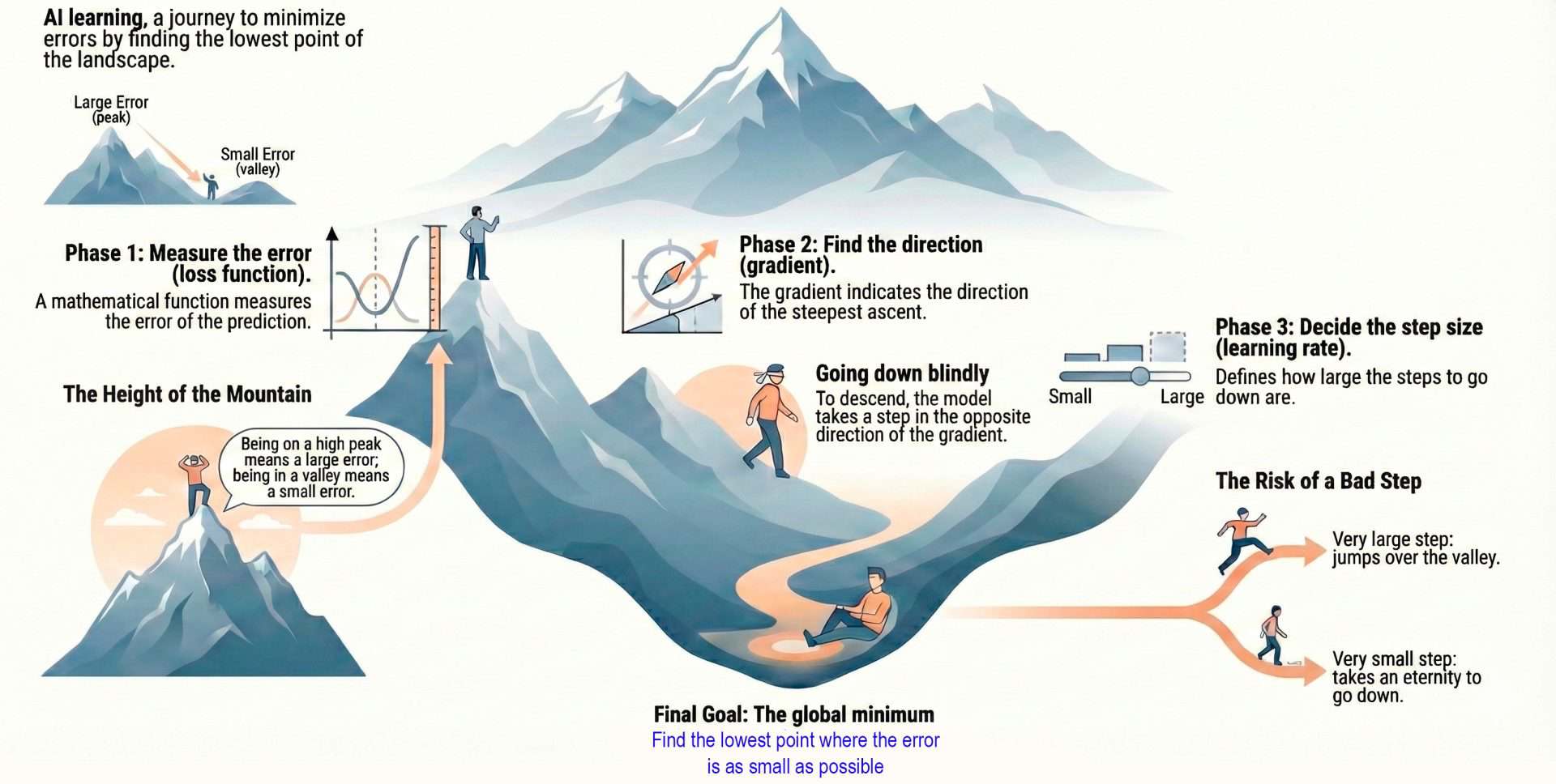

To understand how AI models learn, we can divide the process into three phases.

The first is establishing the learning objective. Imagine the model is a student taking an exam. It’s not enough to tell them “you failed.” To learn, they need to know exactly how wrong they were. This is where the loss function comes in. Mathematically, this function measures the distance between what the model predicted (its answer) and reality (the correct answer in the training data). If the model says a photo is a cat with 90% confidence and it actually is a cat, the loss is close to zero; however, if it says it’s a dog, the loss is a high number.
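To put numbers on the cat example, here is a small sketch using cross-entropy, one common loss for classification. The choice of cross-entropy is an assumption on my part, as is the simplification that there are only two labels, so that 90% confidence in "dog" leaves 10% for "cat".

```python
import math

def cross_entropy(prob_of_correct_label):
    """Loss for one example: the negative log of the probability given to the truth."""
    return -math.log(prob_of_correct_label)

right = cross_entropy(0.90)  # 90% on "cat", and it is a cat
wrong = cross_entropy(0.10)  # 90% on "dog", so only 10% was left for "cat"
print(f"{right:.3f} {wrong:.3f}")  # prints 0.105 2.303
```

A confident correct answer costs about 0.1; a confident wrong answer costs more than twenty times as much. The student is not just told "you failed", but by exactly how much.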

Visually, imagine this function not as a line, but as a rugged, mountainous landscape. The high peaks represent enormous errors (the model is clueless) and the deep valleys represent success (minimum error). The goal of the AI is to find the lowest point in the whole landscape: the global minimum.

Now that we have an objective, the problem is that, at the beginning, the model is like a skydiver who has landed in the middle of that mountain range at night and blindfolded. He doesn’t know where the valley is; he only knows the altitude at which he is currently standing (his current error).

This is the second phase, and it is where differential calculus comes in. The gradient is a vector of partial derivatives that tells the model not where the valley is, but which way the terrain slopes upward most steeply. Since the model wants to reduce error (go down the mountain), it does the opposite of what the gradient indicates: it takes a step in the direction opposite to the slope. If the ground inclines up to the right, the model takes a step down to the left.

Once it knows the direction, the third phase remains: deciding the size of the step it will take. This is controlled by a critical hyperparameter called the learning rate. If the step is too large, the model might overshoot the valley and end up on another mountain (the error doesn’t decrease or even increases). If the step is too small, it will take millions of years to get down the mountain (computational inefficiency). Finally, the model adjusts its parameters (the weights and biases of its artificial neurons) following that direction and step size.
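The three phases can be sketched on the simplest landscape imaginable: a single parameter `w` and a bowl-shaped loss whose lowest point sits at `w = 3`. The loss function, the starting point, and the three learning rates are all invented for the example; real models do the same thing over millions or billions of parameters at once.

```python
def gradient_descent(learning_rate, steps=50, start=0.0):
    """Minimize loss(w) = (w - 3)**2, whose valley floor sits at w = 3."""
    w = start
    for _ in range(steps):
        gradient = 2 * (w - 3)            # slope of the loss at the current w
        w = w - learning_rate * gradient  # step against the slope
    return w

sensible = gradient_descent(0.1)      # reasonable step size
too_big = gradient_descent(1.1)       # overshoots further on every step
too_small = gradient_descent(0.0001)  # barely leaves the starting point

print(f"sensible:  {sensible:.4f}")
print(f"too big:   {too_big:.1f}")
print(f"too small: {too_small:.4f}")
```

With a learning rate of 0.1, fifty steps land almost exactly on 3. With 1.1, each step jumps across the valley and lands higher up the opposite slope, so the error keeps growing. With 0.0001, the skydiver has barely moved from where he landed.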

Doesn’t this way of doing things sound familiar? Humans do exactly the same. Our biological loss function is designed by evolution to minimize pain or hunger and maximize survival. Every time Spooner misjudges a robot and gets hit, his brain receives a massive error signal, calculates the error gradient, and adjusts his synapses to avoid repeating the move.

By adjusting his parameters, his biases are recalibrated (or reinforced, if he falls into overfitting, which is what happens to stubborn people who won’t change their minds even in the face of evidence). Spooner despises the robot because it learns fast, but he has spent 30 years performing social gradient descent to become the cynical cop he is. Both are the result of minimizing their errors in their respective environments.

We’re leaving…

And this is where we’ll leave the philosophy for today, bringing this rather atypical post to an end.

We started with Will Smith acting tough, and we’ve ended up realizing that, if we look under the hood, the difference between a silicon neural network and a carbon-based one is more about hardware than software. We’ve also seen that biological determinism fits suspiciously well with how predictive models work. Our “free” decisions are outputs conditioned by hidden inputs over which we have no control; our creativity is a matter of adjusting the algorithm’s temperature; and learning and maturing is nothing more than optimizing a loss function to stop ourselves from crashing head-first into reality.

Sonny the robot was right with his “neither can you.” Perhaps the next time we see an AI hallucinating or failing, we should be more forgiving. After all, we’ve spent thousands of years hallucinating realities, biasing data, and believing we are the center of the universe, when statistically, we are just one more point in the scatter plot. Accepting our algorithmic nature might take away a bit of the poetry, but it grants us a massive dose of humility. Or perhaps everything I’ve just written was predetermined by my reading history and this morning’s caffeine levels, and I had nothing to do with it at all. But that’s another story…