Publication bias.

Publication bias occurs when not all the relevant information on a topic gets published. This post describes the methods available to detect it.

Achilles. What a man! Definitely one of the main characters in that mess that broke out in Troy because of Helen, a.k.a. the beauty. You know his story. To make him invulnerable, his mother, who was none other than the nymph Thetis, bathed him in ambrosia and submerged him in the River Styx. But she made a mistake that no nymph should be allowed to make: she held him by his right heel, which the river’s water never touched. And so his heel became his only vulnerable spot. Hector didn’t realize it in time, but Paris, far savvier, put an arrow in Achilles’ heel and sent him back to the Styx, though this time not into the water but to the other side. Without Charon the Boatman.

This is the source of the expression Achilles’ heel, a metaphor for the vulnerable spot of someone or something otherwise known for its strength.

Publication bias

For example, something as robust and formidable as a meta-analysis has its Achilles’ heel: publication bias. And that’s because in the world of science there is no social justice.

All scientific works should have the same opportunities to be published and become famous, but that is far from reality. Works can be discriminated against for four reasons: the statistical significance of their results, the popularity of their topic, who sponsors them, and the language in which they are written.

The truth is that papers with statistically significant results are more likely to be published than those with non-significant ones. Moreover, even when both are accepted, the former tend to be published sooner and, more often, in English-language journals, which are more prestigious and more widely read. As a result, those papers are cited more frequently. And the same goes for papers with “positive” results versus those with “negative” results.

Similarly, papers about issues of public interest are more likely to be published regardless of the significance of their results. The sponsor also has its influence: a company that finances a study of one of its products is unlikely to publish the results if they go against the usefulness of that product. And finally, papers written in English reach a wider audience than those written in other languages.

All of this can be made worse by the fact that these same factors may influence the choice of inclusion and exclusion criteria for the primary studies of the meta-analysis, so we may end up with a sample of papers that is not representative of the overall knowledge on the topic addressed by the systematic review and meta-analysis.

If there’s publication bias, the applicability of the results will be seriously compromised. This is why we say that publication bias is the Achilles’ heel of meta-analysis.

If we choose the inclusion and exclusion criteria correctly and we do a global literature search without restrictions, we will have done our best to minimize the risk of bias, but we can never be sure of having prevented it. Therefore, some techniques and tools have been developed to detect publication bias.

Funnel plot

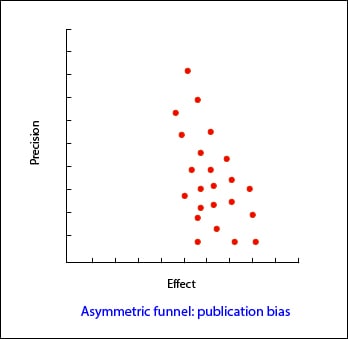

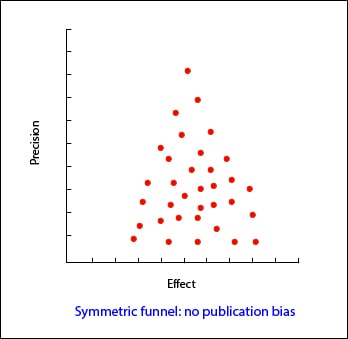

The most widely used is a tool known by a friendly name: the funnel plot. It plots the magnitude of the measured effect (X axis) against a measure of precision (Y axis), which is usually the sample size but may also be the inverse of the variance or the standard error. Each primary study is represented by a dot, and we only have to look at the shape of the dot cloud.

In its most common form, with the sample size on the Y axis, the precision of the results is higher for larger studies, so the dots lie close together at the top of the plot and scatter increasingly toward the bottom, near the origin of the Y axis. Thus, the cloud has the shape of an inverted funnel, with the wide part at the bottom. The shape should be symmetrical and, if it isn’t, we must always suspect publication bias. In the second example shown, you can see that studies are “missing” on the side of lack of effect: this may mean that only studies with positive results have been published.
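To see why the cloud narrows toward the top, here is a minimal sketch of the range in which we would expect a study's result to fall for different sample sizes. It assumes, purely for illustration, that the standard error is `sd / sqrt(n)`. The function name `funnel_limits` and the numbers (a pooled effect of 0.30, a standard deviation of 1) are made up for the example.

```python
import math


def funnel_limits(pooled_effect, sample_size, sd=1.0, z=1.96):
    """Return the approximate 95% range expected around the pooled effect.

    Assumption for illustration only: the standard error of a study
    is sd / sqrt(sample_size).
    """
    se = sd / math.sqrt(sample_size)
    return pooled_effect - z * se, pooled_effect + z * se


# Hypothetical pooled effect of 0.30; larger studies get a narrower range.
for n in (20, 100, 500, 2000):
    low, high = funnel_limits(0.30, n)
    print(f"n={n:5d}  expected range: {low:+.2f} to {high:+.2f}")
```

Small studies are allowed to wander far from the pooled effect in both directions, and large ones are not. If the dots of the small studies show up on only one side of that range, something is missing.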

Numeric methods

The funnel plot is very simple to use but, sometimes, we may have doubts about the asymmetry of the funnel, especially if the number of studies is small. In addition, the funnel may be asymmetric because of poor-quality studies or because we are dealing with interventions whose effect varies with the sample of each study. For these situations, other, more objective methods have been devised, such as Begg’s rank correlation test and Egger’s linear regression.

Begg’s test looks for an association between the effect estimates and their variances. If the two are correlated, that is a bad sign: it suggests publication bias. The problem with this test is that it has low statistical power, so it is unreliable when the number of primary studies is small.
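For the curious, the idea behind Begg’s test can be sketched in a few lines. This is a simplified version, not a validated implementation: it assumes a fixed-effect pooled estimate, uses Kendall’s tau between the standardized deviations of the effects and their variances, ignores ties, and gets the p-value from a normal approximation. The function name `begg_test` and the data are invented for the example.

```python
import math


def begg_test(effects, variances):
    """Rank correlation (Kendall's tau) between standardized effects and variances.

    Simplified sketch: fixed-effect pooling, no correction for ties,
    normal approximation for the two-sided p-value.
    """
    n = len(effects)
    weights = [1.0 / v for v in variances]
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    pooled_var = 1.0 / sum(weights)

    # Standardized deviation of each study from the pooled effect.
    deviates = [
        (y - pooled) / math.sqrt(v - pooled_var)
        for y, v in zip(effects, variances)
    ]

    # Count concordant and discordant pairs.
    concordant = discordant = 0
    for i in range(n):
        for j in range(i + 1, n):
            sign = (deviates[i] - deviates[j]) * (variances[i] - variances[j])
            if sign > 0:
                concordant += 1
            elif sign < 0:
                discordant += 1

    tau = (concordant - discordant) / (n * (n - 1) / 2)
    z = (concordant - discordant) / math.sqrt(n * (n - 1) * (2 * n + 5) / 18)
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided
    return tau, z, p_value


# Made-up data: the less precise the study, the larger its effect.
effects = [0.9, 0.7, 0.6, 0.4, 0.3, 0.25, 0.2]
variances = [0.25, 0.16, 0.12, 0.06, 0.03, 0.02, 0.01]
tau, z, p_value = begg_test(effects, variances)
print(f"tau={tau:.2f}  z={z:.2f}  p={p_value:.4f}")
```

A tau close to zero is what we hope for. A clearly positive or negative tau means the effect size moves together with the imprecision of the studies.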

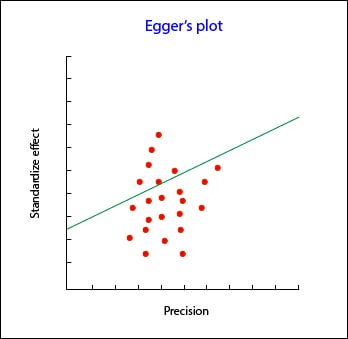

Egger’s test is more specific than Begg’s. It fits a regression line with the precision of the studies as the independent variable and the standardized effect as the dependent variable. This regression must be weighted by the inverse of the variance, so I don’t recommend doing it on your own unless you are a consummate statistician. When there is no publication bias, the regression line crosses the Y axis at zero. The further the intercept lies from zero, the stronger the evidence of publication bias.
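Just to show what is being computed, here is a rough sketch of the regression behind Egger’s test. It assumes the common formulation in which the standardized effect (effect divided by its standard error) is regressed on the precision (one divided by the standard error) with ordinary least squares. That is algebraically the same as regressing the effect on the standard error with inverse-variance weights. The function name `egger_intercept` and the data are hypothetical. The sketch stops at the t statistic, which would be compared with a t distribution with n - 2 degrees of freedom.

```python
import math


def egger_intercept(effects, std_errors):
    """Regress standardized effect on precision; return intercept, slope, t.

    Sketch only: plain least squares on (1/SE, effect/SE). The t statistic
    of the intercept has n - 2 degrees of freedom.
    """
    x = [1.0 / se for se in std_errors]                   # precision
    y = [e / se for e, se in zip(effects, std_errors)]    # standardized effect
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))

    slope = sxy / sxx
    intercept = mean_y - slope * mean_x

    # Residual variance and standard error of the intercept.
    residual_ss = sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(x, y))
    residual_var = residual_ss / (n - 2)
    se_intercept = math.sqrt(residual_var * (1.0 / n + mean_x ** 2 / sxx))
    t_stat = intercept / se_intercept
    return intercept, slope, t_stat


# Made-up data: smaller studies (larger SE) report larger effects.
effects = [0.95, 0.62, 0.70, 0.41, 0.36, 0.33]
std_errors = [0.50, 0.40, 0.30, 0.20, 0.12, 0.08]
intercept, slope, t_stat = egger_intercept(effects, std_errors)
print(f"intercept={intercept:.2f}  slope={slope:.2f}  t={t_stat:.2f}")
```

The number to look at is the intercept: the slope estimates the underlying effect, while an intercept far from zero is the warning sign.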

As always, there are computer programs that run these tests quickly, so we don’t have to fry our brains with the calculations.

Trim and fill

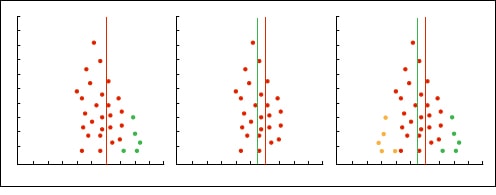

And what if, after doing all the work, we find that there is publication bias? Can we do anything to adjust for it? As always, we can.

The easiest way is a graphical technique called trim and fill adjustment. It works as follows: a) draw the funnel plot, b) remove the small studies that make the funnel asymmetric, c) recalculate the new center of the graph, d) put back the removed studies and add their mirror images on the other side of the center line of the cloud, e) re-estimate the effect.
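The steps above can be sketched in code, with quite a few simplifying assumptions. This version pools with a fixed-effect model, assumes that the missing studies are on the left side of the funnel (so it trims the most extreme studies on the right), and estimates how many studies to trim with a rank-based estimator known as L0. Ties are ignored. All function names and the data are invented for the example, so take it as an illustration of the idea rather than a tool for a real meta-analysis.

```python
def pooled_effect(effects, variances):
    """Inverse-variance (fixed-effect) pooled estimate."""
    weights = [1.0 / v for v in variances]
    return sum(w * y for w, y in zip(weights, effects)) / sum(weights)


def trim_and_fill(effects, variances, max_iter=50):
    """Simplified trim and fill, assuming missing studies on the left side.

    Returns (k0, adjusted_effect, filled_effects, filled_variances).
    """
    n = len(effects)
    order = sorted(range(n), key=lambda i: effects[i])  # ascending effect
    k0 = 0
    for _ in range(max_iter):
        # Trim: drop the k0 most extreme studies on the right, recenter.
        kept = order[: n - k0]
        center = pooled_effect([effects[i] for i in kept],
                               [variances[i] for i in kept])
        # Rank all studies by absolute distance from the new center.
        deviations = [effects[i] - center for i in range(n)]
        by_distance = sorted(range(n), key=lambda i: abs(deviations[i]))
        rank_sum = sum(rank for rank, i in enumerate(by_distance, start=1)
                       if deviations[i] > 0)
        # L0 estimator of the number of missing studies.
        estimate = round((4 * rank_sum - n * (n + 1)) / (2 * n - 1))
        new_k0 = min(max(0, estimate), n - 1)
        if new_k0 == k0:
            break
        k0 = new_k0

    # Fill: mirror the trimmed studies around the trimmed center.
    kept = order[: n - k0]
    trimmed = order[n - k0:]
    center = pooled_effect([effects[i] for i in kept],
                           [variances[i] for i in kept])
    filled_effects = list(effects) + [2 * center - effects[i] for i in trimmed]
    filled_variances = list(variances) + [variances[i] for i in trimmed]
    adjusted = pooled_effect(filled_effects, filled_variances)
    return k0, adjusted, filled_effects, filled_variances


# Made-up data with the small, imprecise studies all on the right.
effects = [0.9, 0.7, 0.6, 0.4, 0.3, 0.25, 0.2]
variances = [0.25, 0.16, 0.12, 0.06, 0.03, 0.02, 0.01]
k0, adjusted, _, _ = trim_and_fill(effects, variances)
print(f"original={pooled_effect(effects, variances):.3f}  "
      f"imputed studies={k0}  adjusted={adjusted:.3f}")
```

With these assumptions the adjusted effect can only move toward the side where the studies were missing, which is exactly the correction we are after.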

We’re leaving…

And finally, I’ll only say that there’s a second method, much more accurate but also much more complex, which consists of a regression model based on Egger’s test. But that’s another story…